A new system combines sight and touch, allowing robots to handle delicate, feel-dependent tasks with far greater dexterity than vision alone allows. This could be a key step toward bringing truly helpful robots into our homes and workplaces.

Think about the last time you picked up a cup of coffee. You saw it, reached for it, and your fingers automatically adjusted their grip the moment they made contact. You judged its weight, its temperature, and the texture of the ceramic without a single conscious thought. This seamless fusion of sight and touch is a marvel of biological engineering, something we humans do effortlessly. For artificial intelligence, however, replicating this seemingly simple act has been a monumental challenge.

For years, robotic systems have relied primarily on vision. Cameras act as their eyes, allowing them to identify objects, calculate distances, and plan movements. This vision-only approach has powered incredible advances, from factory automation to autonomous vehicles. But it has a significant blind spot: it lacks the nuanced, real-world feedback that comes from the sense of touch. A robot can see a piece of Velcro, but can it tell the difference between the soft, loopy side and the stiff, hook-covered side just by looking? Can it feel the subtle click of a zip tie locking into place? Relying solely on vision is like trying to button a shirt with thick gloves on—you can see what you need to do, but you lack the crucial sensory information to do it well.

This is where the limitations of current physical AI become clear. Tasks that require distinguishing textures, judging adhesiveness, or confirming a secure connection are often beyond the capabilities of vision-only robots. This has been a major roadblock preventing robots from becoming the versatile, everyday helpers we often see in science fiction.

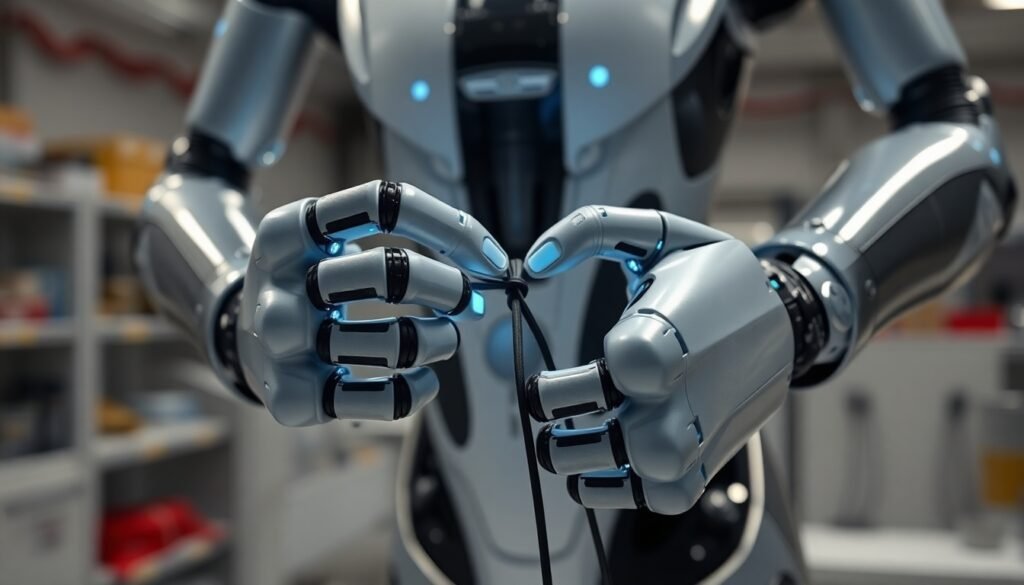

To overcome this challenge, an international team of researchers from Tohoku University, the Hong Kong Science Park, and the University of Hong Kong has developed a new approach. Their system, aptly named “TactileAloha,” enhances a sophisticated robotic platform with the one sense it was critically missing: touch.

The foundation of their work is a system called ALOHA (A Low-cost Open-source Hardware System for Bimanual Teleoperation), developed at Stanford University. ALOHA is a dual-arm robotic system that can learn complex tasks by having a human operator teleoperate it, essentially teaching it by demonstration. The research team took this powerful platform and integrated high-fidelity tactile sensors onto the robot’s grippers. This allows the robot not just to see an object, but to feel it.
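To make “teaching by demonstration” concrete, here is a minimal sketch of behavior cloning, the general idea of learning a policy by supervised regression on recorded observation/action pairs. It is not ALOHA’s training code: the observation and action sizes, the synthetic “expert” standing in for a human operator, and the linear policy are all hypothetical simplifications (practical systems use deep neural networks).

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical sizes: a small observation vector and a 14-D action.
OBS_DIM, ACTION_DIM = 20, 14


def collect_demonstrations(n_steps: int):
    """Stand-in for teleoperation logs: (observation, action) pairs.

    A hidden linear 'expert' plays the role of the human operator."""
    expert = rng.standard_normal((OBS_DIM, ACTION_DIM))
    obs = rng.standard_normal((n_steps, OBS_DIM))
    noise = 0.01 * rng.standard_normal((n_steps, ACTION_DIM))  # imperfect demos
    return obs, obs @ expert + noise, expert


def fit_policy(obs: np.ndarray, actions: np.ndarray) -> np.ndarray:
    """Behavior cloning as regression: W minimizing ||obs @ W - actions||^2."""
    W, *_ = np.linalg.lstsq(obs, actions, rcond=None)
    return W


obs, actions, expert = collect_demonstrations(500)
policy = fit_policy(obs, actions)
# At run time the learned policy imitates the operator on a new observation.
new_obs = rng.standard_normal(OBS_DIM)
predicted_action = new_obs @ policy
```

In this toy setup, adding touch would simply mean appending tactile readings as extra columns of each observation; the learning step itself stays the same.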

The magic happens when this new tactile data is combined with the visual and proprioceptive information the robot already had (proprioception being the robot’s awareness of where its own limbs are). The team employed an AI model known as a vision-tactile transformer, which acts as a hub: it takes in the streams of data from all the different senses and learns how they relate to one another. It learns to associate the visual appearance of an object with its tactile properties, allowing it to make more intelligent and adaptive decisions. In essence, the AI learns to “feel” its way through a task, much like a human does.
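The fusion idea can be sketched in a few lines of NumPy. This is a toy, single-layer illustration of how a transformer can mix senses, not the authors’ vision-tactile transformer: the feature sizes, the single attention head, the random (untrained) weights, and the 14-dimensional action output are all assumptions chosen for illustration. Each sense becomes one token in a shared embedding space, self-attention lets every token draw on the others, and the mixed tokens are pooled into an action vector.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for illustration.
VISION_DIM, TACTILE_DIM, PROPRIO_DIM = 512, 64, 14
EMBED_DIM = 32
ACTION_DIM = 14  # assumption: two arms, six joints plus one gripper each


def make_proj(in_dim: int, out_dim: int) -> np.ndarray:
    """Random linear projection; a trained model would learn these weights."""
    return rng.standard_normal((in_dim, out_dim)) / np.sqrt(in_dim)


# One projection per sense maps its features into the shared token space.
W_vision = make_proj(VISION_DIM, EMBED_DIM)
W_tactile = make_proj(TACTILE_DIM, EMBED_DIM)
W_proprio = make_proj(PROPRIO_DIM, EMBED_DIM)
# Query/key/value projections for a single self-attention head.
W_q, W_k, W_v = (make_proj(EMBED_DIM, EMBED_DIM) for _ in range(3))
W_out = make_proj(EMBED_DIM, ACTION_DIM)
# Modality embedding (random here, learned in practice) tags each token's sense.
modality_embed = 0.02 * rng.standard_normal((3, EMBED_DIM))


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def fuse_and_act(vision, tactile, proprio):
    """Turn one observation from each sense into an action vector."""
    tokens = np.stack([
        vision @ W_vision,
        tactile @ W_tactile,
        proprio @ W_proprio,
    ]) + modality_embed                            # shape (3, EMBED_DIM)
    q, k, v = tokens @ W_q, tokens @ W_k, tokens @ W_v
    # attn[i, j]: how much token i (e.g. vision) draws on token j (e.g. touch)
    attn = softmax(q @ k.T / np.sqrt(EMBED_DIM))  # shape (3, 3), rows sum to 1
    mixed = tokens + attn @ v                      # residual connection
    action = mixed.mean(axis=0) @ W_out            # pool tokens, map to action
    return action, attn


action, attn = fuse_and_act(
    rng.standard_normal(VISION_DIM),
    rng.standard_normal(TACTILE_DIM),
    rng.standard_normal(PROPRIO_DIM),
)
print(action.shape)  # (14,)
```

In a trained model, the attention matrix `attn` indicates how strongly each sense relies on the others at a given moment; here its values carry no meaning because the weights are random.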

To prove the effectiveness of TactileAloha, the researchers designed two experiments that are deceptively difficult for a robot: fastening a strip of Velcro and inserting and tightening a zip tie. Both tasks are heavily reliant on tactile feedback. With Velcro, the robot needs to identify the hook and loop sides and press them together with the correct orientation and force. With the zip tie, it must feel for the correct orientation to insert the tail into the locking mechanism and then pull with enough force to secure it.

When a vision-only system attempted these tasks, it struggled and often failed: it couldn’t reliably distinguish the two sides of the Velcro or confirm that the zip tie was properly aligned. TactileAloha performed markedly better. By feeling the textures, the robot could adapt its strategy in real time, correcting its grip and applying appropriate force. Its advantage was not limited to vision-only baselines, either: the study reports that TactileAloha achieved an average relative improvement of approximately 11% over other state-of-the-art methods that also use tactile input.
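Because “relative improvement” is easy to confuse with a gain in percentage points, here is the usual definition, assuming the figure averages per-task relative gains over N tasks (the exact averaging the authors used is an assumption here), where S_i denotes a success rate on task i:

$$
\bar{\Delta}_{\text{rel}} = \frac{1}{N}\sum_{i=1}^{N} \frac{S_i^{\text{TactileAloha}} - S_i^{\text{baseline}}}{S_i^{\text{baseline}}}
$$

For a hypothetical task where a baseline succeeds 60% of the time, an 11% relative improvement would mean a success rate of about 66.6%: a gain of 6.6 percentage points, not 11.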

“This achievement represents an important step toward realizing a multimodal physical AI that integrates and processes multiple senses such as vision, hearing, and touch – just like we do,” explains Mitsuhiro Hayashibe, a professor at Tohoku University and a key member of the research team.

The implications could be far-reaching. By giving robots a more holistic and human-like way of sensing the world, TactileAloha paves the way for machines that can perform a wide array of everyday tasks. Imagine robots that can assist the elderly with delicate tasks, help with intricate cooking procedures, or perform complex assembly in manufacturing with greater reliability. This research moves us one significant step closer to a future where robotic helpers are not just a novelty, but a seamless and helpful part of our daily lives, able to navigate the physical world with a dexterity that was once purely the domain of biology.

Reference

Hayashibe, M., et al. (2025). TactileAloha: Learning Bimanual Manipulation with Tactile Sensing. IEEE Robotics and Automation Letters.